Your AI Project Just Became an Organisational Design Problem

Nobody puts it in the project charter. It doesn't appear in the vendor proposal. It certainly doesn't come up in the demo.

But at some point in almost every contact centre AI implementation, the project stops being about technology and starts being about something much harder.

Who owns the customer?

The Contact Centre Has Always Sat at the Intersection

Think about what your contact centre actually does.

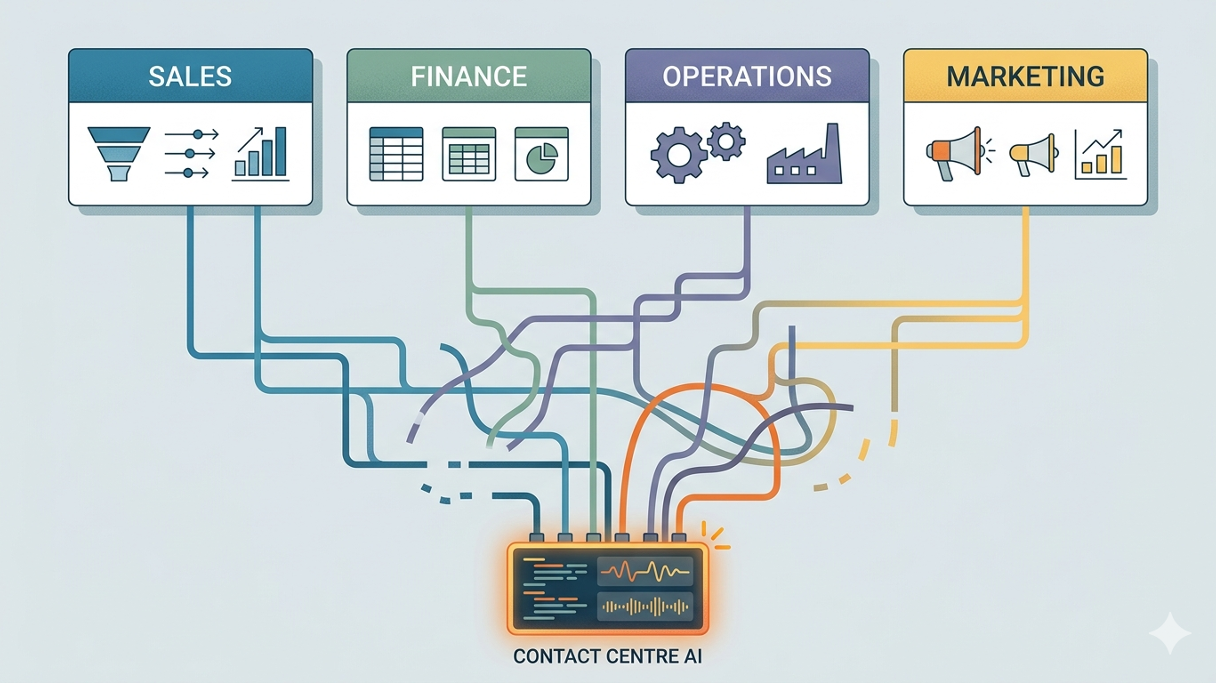

It's the place where every part of your organisation meets the customer in real time. Billing disputes. Delivery problems. Product questions. Account changes. Complaints that started in one channel and escalated through three others.

Your agents handle all of it. And to handle it, they need information from systems they don't own — CRM records from Sales, billing data from Finance, order status from Operations, case history from whatever system the last team implemented.

They've been doing this for years. Reaching across organisational boundaries, pulling data from systems designed for other teams, reconciling inconsistencies on the fly, and making judgment calls that nobody ever documented.

It works. Mostly. Because humans are extraordinarily good at navigating ambiguity.

AI is not.

What Silos Actually Look Like in Practice

Organisational silos aren't usually deliberate. They accumulate the same way technology stacks do — incrementally, rationally, one decision at a time.

Sales implements a CRM to manage the pipeline. Finance builds out a billing platform to manage revenue. Operations runs its own order management system. Marketing owns the customer data platform. Each team chose tools that fit their needs, built processes around them, and got very good at working within their own boundaries.

The contact centre sits downstream of all of it. Agents became expert at translating between these worlds — knowing which system to check first, which record to trust when two systems disagreed, which team to call when something didn't add up.

That translation layer is invisible. It lives in the heads of your experienced agents. It has never been documented because it never needed to be.

Until now.

AI Inherits Your Org Chart

When you introduce an AI agent into this environment, something becomes immediately apparent.

The AI has no institutional memory. No informal network. No ability to call the billing team and ask why this record looks wrong. It works with what it can access — and what it can access is shaped entirely by your organisational structure.

If your CRM and billing platform don't share a consistent customer identifier, the AI can't reconcile them. If your order management system updates on a nightly batch rather than in real time, the AI will tell customers things that were true yesterday but aren't true now. If your knowledge base is owned by a team that hasn't reviewed it since the last product update, the AI will confidently surface the wrong answer.

Every silo in your organisation has a data boundary. AI hits every one of them.
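To make those boundaries concrete, here is a minimal sketch of the checks an AI integration might run before answering from cross-team data. Everything in it is an assumption for illustration: the `Record` shape, the system names, and the one-hour freshness threshold are hypothetical, not part of any particular platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Record:
    system: str                 # hypothetical system name, e.g. "crm"
    customer_id: Optional[str]  # shared identifier, if the system carries one
    updated_at: datetime        # when the owning system last refreshed the record


def boundary_issues(records: list[Record], now: datetime, max_age: timedelta) -> list[str]:
    """Return the reasons these records should not be trusted as-is."""
    issues = []
    for r in records:
        if r.customer_id is None:
            issues.append(f"{r.system}: no shared customer identifier")
        if now - r.updated_at > max_age:
            issues.append(f"{r.system}: data older than {max_age}")
    # More than one distinct identifier means the systems cannot be reconciled.
    ids = {r.customer_id for r in records if r.customer_id is not None}
    if len(ids) > 1:
        issues.append("systems disagree on customer identifier")
    return issues


# Example: the CRM is fresh, but orders were last loaded by a nightly batch.
now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
records = [
    Record("crm", "C-1", now - timedelta(minutes=5)),
    Record("orders", "C-1", now - timedelta(hours=20)),
]
issues = boundary_issues(records, now, max_age=timedelta(hours=1))
```

The code is the easy part. Someone still has to decide what counts as "too old" for each system, and that decision belongs to the team that owns the data.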

And here's the difficult part. These aren't technology problems. The technology could connect these systems tomorrow. The problem is that nobody has ever had sufficient urgency — or sufficient authority — to make the teams that own each system align around a shared view of the customer.

Until the AI project makes it unavoidable.

The Forcing Function Nobody Asked For

This is where the AI project becomes genuinely uncomfortable — and genuinely valuable.

Because for the first time, the organisational design problem has a hard deadline attached to it. The AI goes live in six months. For it to work, someone needs to answer questions that have been deferred for years.

Which system is the source of truth for customer identity? Who resolves it when two systems disagree? Who owns the process when data is wrong? Who is accountable when the AI surfaces outdated information because a team didn't update their system?

These questions don't have technology answers. They have governance answers.

Getting AI ready means getting your organisation ready first. That requires executive sponsorship, cross-functional accountability, and a willingness to have conversations about data ownership that most organisations have been successfully avoiding for years.

What Organisational Alignment Actually Requires

The Foundation First framework starts here — before data quality, before system documentation, before platform selection — because none of those things can be resolved without this one.

Map your data ownership. For every system that touches customer data, who owns it? Who is accountable for its accuracy? Who decides when it changes? If these questions don't have clear answers, you have your first project.

Identify your boundary crossings. Where does your contact centre currently rely on data it doesn't own? Those are your dependency points — and your AI's potential failure points. Each one needs a conversation with the team on the other side.

Define your source of truth hierarchy. When two systems disagree about a customer, which one wins? This sounds simple. It almost never is. But it needs a documented answer before AI can make a reliable decision.

Build cross-functional accountability. AI readiness isn't a contact centre project. It's an organisational project led by the contact centre. That distinction matters for resourcing, governance, and the escalation path when teams don't align.

Document the informal. Every workaround your agents run, every system quirk they navigate, every inconsistency they compensate for: that institutional knowledge needs to be captured and addressed before the AI inherits the environment that knowledge describes.
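Once the ownership and source-of-truth questions above have documented answers, they can be written down in a form a machine can follow. The sketch below is one possible shape, not a prescribed implementation: the field names, system names, precedence order, and owning teams are all hypothetical placeholders for whatever your organisation agrees.

```python
from typing import Optional

# Hypothetical precedence: for each field, systems listed from most to least trusted.
SOURCE_OF_TRUTH = {
    "email": ["crm", "billing"],
    "billing_address": ["billing", "crm"],
}

# Hypothetical ownership map: the team accountable for each system's accuracy.
OWNERS = {"crm": "Sales", "billing": "Finance"}


def resolve(field: str, values: dict[str, Optional[str]]) -> tuple[str, str, bool]:
    """Pick the value from the highest-ranked system that holds one.

    Returns (value, winning_system, conflict). conflict is True when a
    lower-ranked system holds a different non-empty value.
    """
    if field not in SOURCE_OF_TRUTH:
        raise KeyError(f"no documented source of truth for {field!r}")
    ranked = [s for s in SOURCE_OF_TRUTH[field] if values.get(s)]
    if not ranked:
        raise LookupError(f"no system holds a value for {field!r}")
    winner = ranked[0]
    conflict = any(values[s] != values[winner] for s in ranked[1:])
    return values[winner], winner, conflict


# Example: CRM and billing disagree about a customer's address.
value, system, conflict = resolve(
    "billing_address",
    {"crm": "1 Old Road", "billing": "9 New Street"},
)
escalate_to = OWNERS[system] if conflict else None
```

A field with no entry in the hierarchy raises an error rather than guessing. That is deliberate: an undocumented answer is a governance gap to escalate, not something for the AI to improvise around.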

The Conversation That Needs to Happen at the Top

None of this can be resolved at an operational level. Data ownership, cross-functional accountability, and source-of-truth decisions — these require executive alignment and organisational will.

The good news is that the AI project gives you the most compelling case for that conversation you've ever had.

Not "we should fix our data silos" — which has been on the list for years and never made it to the top.

But: "Our AI implementation will fail — and our investment will be wasted — unless we resolve these organisational dependencies first."

That's a different conversation. And it tends to get different results.

Foundation First: Pillar One

Organisational alignment isn't the glamorous part of an AI project. It doesn't feature in the demo. The vendor won't scope it for you. But it's the first question the Foundation First framework asks — because everything else depends on the answer.

Do your teams share data, or do they hoard it?

If you don't know the answer, that's where the work starts.

Next: when your teams are aligned, the second question is whether your systems can actually tell the truth about a customer — consistently, verifiably, in real time.

Paul Wilson, Co-founder, Canzuki | Vendor-agnostic CX consulting across NZ & AU | Problem first. Platform last.